Are you weighing speed-to-prototype against production-grade, stateful workflows in 2026? You’re not alone. Pressure to deliver AI impact is surging: generative AI could add $2.6–$4.4 trillion in annual economic value, and controlled studies show developers complete coding tasks up to 55% faster.

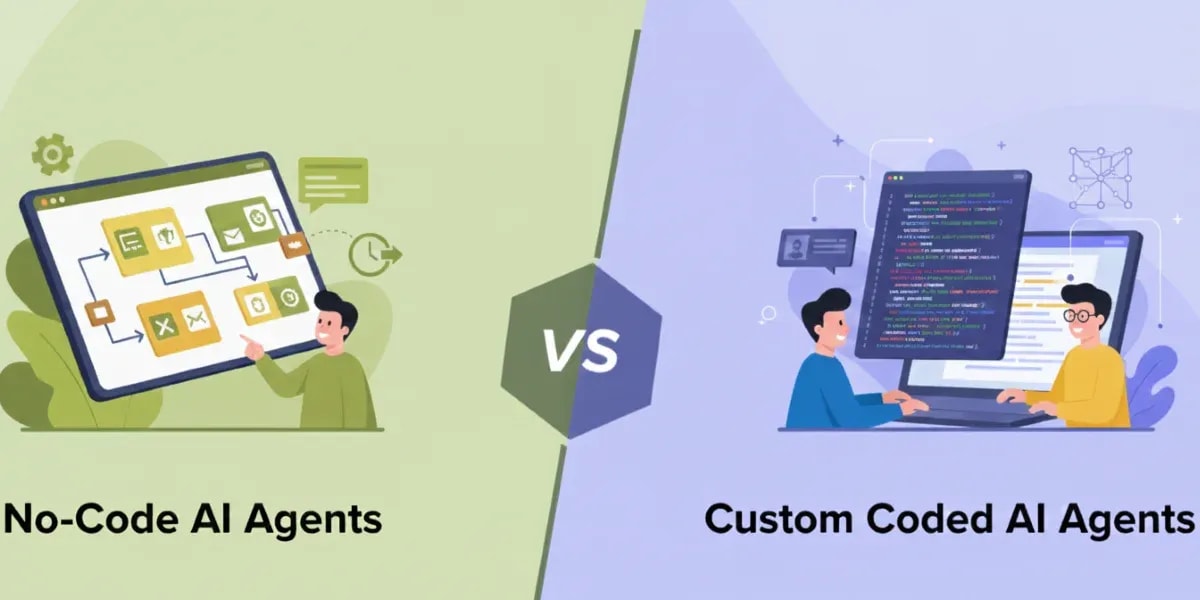

AI leaders evaluating agent orchestration in 2026 face a practical trade-off: speed-to-prototype versus production-grade, stateful workflows. LangChain remains excellent for linear AI pipelines like chatbots and RAG, while LangGraph, built on a node-and-edge model with persistent state, dominates complex, multi-agent systems. In short: if you need fast experiments and simple flows, choose LangChain; for robust, cyclic, and recoverable agent orchestration at scale, LangGraph usually wins.

Quick comparison at a glance:

| Dimension | LangChain | LangGraph |
| --- | --- | --- |
| Architecture | Sequential chains (DAG) using LCEL | Cyclic graph with nodes, edges, and shared state |
| Best for | Chatbots, RAG, summarization, MVPs | Multi-agent systems, stateful workflows, enterprise AI |
| State management | External or ad hoc | Persistent, first-class, with rollback support |
| Loop and branching support | Limited, requires workarounds | Native, built-in |
| Learning curve | Low to moderate | Moderate to steep |
| Human-in-the-loop | Manual implementation | Native checkpoint support |

LangChain is an open-source framework for building LLM-powered applications using modular, sequential workflows. Originally launched in late 2022 by Harrison Chase, it connects prompts, tools, retrievers, and LLM systems into linear pipelines using its declarative orchestration syntax called LCEL (LangChain Expression Language). LangChain simplifies chaining prompts, tools, and retrievers for tasks like chatbots, document QA, and summarization, making it ideal for rapid prototyping and straightforward AI pipelines. Its rich component library, including document loaders, text splitters, vector store connectors, and model interfaces, has made it one of the most widely adopted LLM frameworks, with integrations spanning OpenAI, Anthropic, Hugging Face, and hundreds of other providers.

LangGraph is a graph-based orchestration framework built on top of LangChain, purpose-built for complex, enterprise-grade agentic AI: multi-agent systems, loops, branching, and long-lived, stateful workflows. Released in 2023 and reaching its stable v1.0 milestone in September 2025, it treats your application as a directed cyclic graph of nodes (steps) and edges (transitions), enabling sophisticated control over agent behavior and reliable execution with persistent state. Unlike LangChain’s directed acyclic graph (DAG) structure, LangGraph natively supports cycles, meaning agents can loop back, revisit decisions, and adapt their behavior based on evolving conditions.

For enterprise teams, this distinction matters: agentic AI projects that demand concurrency, retries, memory, and governance will benefit from LangGraph’s stateful workflows and precise agent orchestration, while simpler, stateless tasks often reach value faster with LangChain. Both frameworks are part of the same ecosystem; LangChain provides the foundational components (models, tools, retrievers), while LangGraph adds the stateful orchestration layer on top. Teams don’t have to choose one or the other; they can and frequently do use both together.

Control flow in LLM frameworks describes how tasks and model calls advance across steps, whether sequentially or with branching, loops, retries, timeouts, and failover. It dictates how your AI pipeline behaves under real-world conditions.

LangChain emphasizes sequential chains and tool-augmented prompts, orchestrated through LCEL (LangChain Expression Language), its declarative pipe-based syntax for wiring components together. You typically design a linear pipeline such as query → retrieve → synthesize, with optional conditional logic. Structurally, this is a directed acyclic graph (DAG): tasks execute in a fixed order with no loops. The pattern is fast to implement and easy to reason about for deterministic flows.

Here’s what a basic LangChain LCEL pipeline looks like:

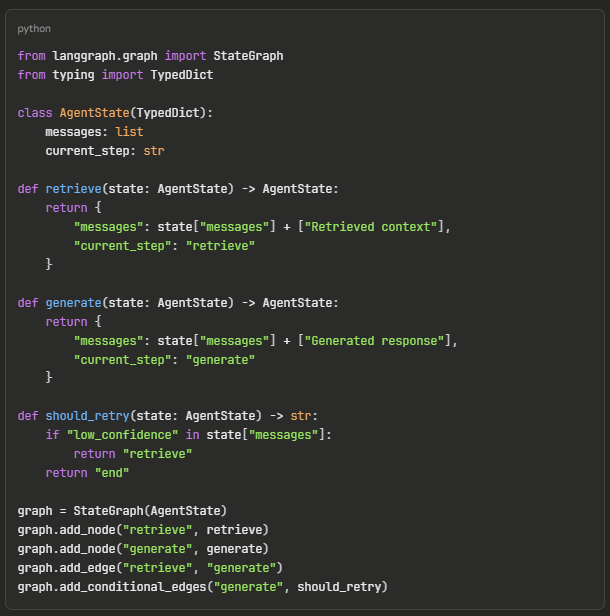
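To make the pipe pattern concrete in text form, here is a minimal, library-free sketch of a query → retrieve → synthesize flow. It only imitates the pipe style of composition: `Step`, `DOCS`, `retrieve`, `build_prompt`, and `fake_model` are hypothetical names invented for this illustration, not LangChain APIs, and the "model" is a stub rather than a real LLM call.

```python
from typing import Any, Callable


class Step:
    """Wraps a function so steps can be chained with the | operator."""

    def __init__(self, fn: Callable[[Any], Any]) -> None:
        self.fn = fn

    def __or__(self, other: "Step") -> "Step":
        # Composing two steps yields a new step that runs them in order.
        return Step(lambda value: other.fn(self.fn(value)))

    def invoke(self, value: Any) -> Any:
        return self.fn(value)


# Hypothetical in-memory "document store", used only for this illustration.
DOCS = {
    "langgraph": "LangGraph models applications as graphs with shared state.",
    "langchain": "LangChain chains prompts, tools, and retrievers.",
}


def retrieve(query: str) -> dict:
    # Return every document whose key appears in the query text.
    hits = [text for key, text in DOCS.items() if key in query.lower()]
    return {"query": query, "context": hits}


def build_prompt(data: dict) -> str:
    context = " ".join(data["context"]) or "(no context found)"
    return f"Context: {context}\nQuestion: {data['query']}"


def fake_model(prompt: str) -> str:
    # Stand-in for an LLM call: echoes the first line of the prompt.
    return "ANSWER based on -> " + prompt.splitlines()[0]


# Data flows left to right, once, with no way to loop back.
rag_chain = Step(retrieve) | Step(build_prompt) | Step(fake_model)
answer = rag_chain.invoke("What is LangGraph?")
```

Each stage receives only the previous stage’s output, which is why this shape is easy to reason about and also why it cannot revisit an earlier step.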

LangGraph models applications as graphs with explicit nodes, edges, and a shared state object. It natively supports cycles, branching, and asynchronous fan-out/fan-in, enabling complex multi-agent systems and robust recovery strategies. This results in more control over long-running, stateful workflows and fine-grained observability across steps.
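Asynchronous fan-out/fan-in can be illustrated with plain `asyncio`. This is a sketch of the pattern only, not LangGraph’s runtime: `research_agent`, `pricing_agent`, and `fan_out_fan_in` are hypothetical names, and the sleeps stand in for real model or API calls.

```python
import asyncio


async def research_agent(state: dict) -> dict:
    await asyncio.sleep(0.01)  # simulate I/O such as a model or API call
    return {"research": f"notes on {state['topic']}"}


async def pricing_agent(state: dict) -> dict:
    await asyncio.sleep(0.01)
    return {"pricing": f"price list for {state['topic']}"}


async def fan_out_fan_in(state: dict) -> dict:
    # Fan-out: run both branches concurrently against the same input state.
    updates = await asyncio.gather(research_agent(state), pricing_agent(state))
    # Fan-in: merge each branch's partial update into one new state object.
    merged = dict(state)
    for update in updates:
        merged.update(update)
    return merged


final_state = asyncio.run(fan_out_fan_in({"topic": "widgets"}))
```

Each branch returns only the keys it owns, so the merge step never has to resolve conflicting writes. A real system would need an explicit rule for keys that more than one branch can update.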

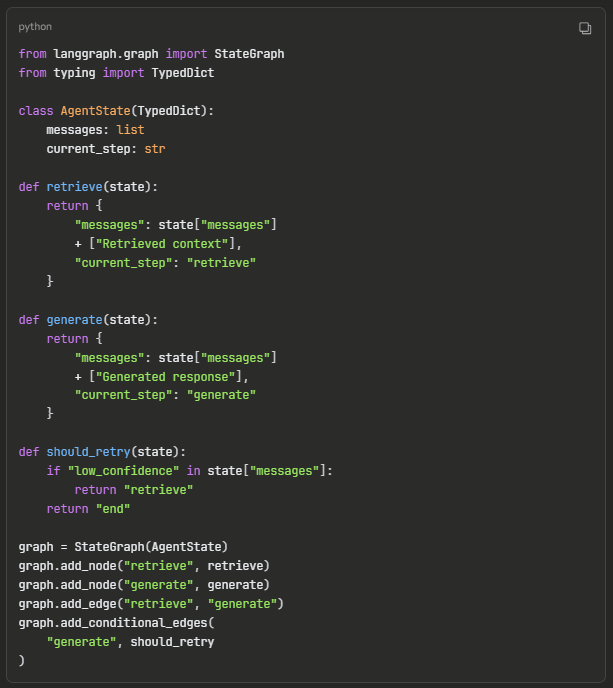

Here’s how a basic LangGraph workflow is structured:

Notice the key difference: LangChain’s LCEL gives you a clean, linear pipeline where data flows in one direction. LangGraph’s graph structure supports conditional edges and cycles, so the agent can loop back from generate to retrieve based on runtime conditions, something that isn’t possible in a DAG.
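The loop from generate back to retrieve can be sketched with a tiny hand-rolled graph runner. This is an assumption-laden illustration, not LangGraph itself: `MiniGraph`, its methods, the `END` sentinel, and the node functions are all hypothetical, and the "retrieval" is faked so that the second attempt succeeds.

```python
from typing import Callable, Dict

State = Dict[str, object]
END = "__end__"  # sentinel meaning "stop running"


class MiniGraph:
    """Nodes update a shared state dict; routers pick the next node."""

    def __init__(self) -> None:
        self.nodes: Dict[str, Callable[[State], State]] = {}
        self.routers: Dict[str, Callable[[State], str]] = {}

    def add_node(self, name: str, fn: Callable[[State], State]) -> None:
        self.nodes[name] = fn

    def add_edge(self, source: str, target: str) -> None:
        # A fixed edge is a router that always returns the same target.
        self.routers[source] = lambda _state: target

    def add_conditional_edge(self, source: str, router: Callable[[State], str]) -> None:
        self.routers[source] = router

    def run(self, entry: str, state: State, max_steps: int = 20) -> State:
        current, steps = entry, 0
        while current != END:
            if steps >= max_steps:
                raise RuntimeError("step limit reached; possible infinite loop")
            state = {**state, **self.nodes[current](state)}  # merge node update
            current = self.routers[current](state)           # choose next node
            steps += 1
        return state


def retrieve(state: State) -> State:
    attempt = state["attempts"] + 1
    # Pretend the first retrieval misses and the second finds a document.
    docs = ["relevant doc"] if attempt >= 2 else []
    return {"attempts": attempt, "docs": docs}


def generate(state: State) -> State:
    docs = state["docs"]
    return {"answer": f"answer from {len(docs)} doc(s)" if docs else ""}


def route_after_generate(state: State) -> str:
    # Conditional edge: loop back to retrieve until an answer exists.
    return END if state["answer"] else "retrieve"


graph = MiniGraph()
graph.add_node("retrieve", retrieve)
graph.add_node("generate", generate)
graph.add_edge("retrieve", "generate")
graph.add_conditional_edge("generate", route_after_generate)

result = graph.run("retrieve", {"attempts": 0, "docs": [], "answer": ""})
```

The `max_steps` guard is a design choice worth keeping in any cyclic workflow: once loops are allowed, something has to bound them.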

In practice, LangChain speeds up initial builds but can become cumbersome as requirements for branching, retries, or multi-agent coordination grow. As orchestration needs increase, custom glue code proliferates and maintainability drops, a common pain point for teams trying to scale LangChain beyond simple use cases. LangGraph shifts that complexity into a formal graph abstraction: you accept a steeper learning curve, and extra orchestration overhead on simple tasks, in exchange for better maintainability in production-scale agent orchestration.
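As one example of the glue code in question, here is a rough sketch of a retry wrapper applied to a single step. `with_retries` and `flaky_tool_node` are hypothetical names for illustration, not part of either framework, and catching every `Exception` is a simplification that a real system would narrow to known transient errors.

```python
import time
from typing import Callable, Dict

State = Dict[str, object]


def with_retries(node: Callable[[State], State], max_attempts: int = 3,
                 base_delay: float = 0.0) -> Callable[[State], State]:
    """Wrap a node so transient failures are retried with exponential backoff."""

    def wrapped(state: State) -> State:
        last_error = None
        for attempt in range(1, max_attempts + 1):
            try:
                return node(state)
            except Exception as exc:  # simplification: retry on any error
                last_error = exc
                if attempt < max_attempts:
                    time.sleep(base_delay * 2 ** (attempt - 1))
        raise RuntimeError(f"node failed after {max_attempts} attempts") from last_error

    return wrapped


calls = {"count": 0}


def flaky_tool_node(state: State) -> State:
    # Fails twice, then succeeds, to simulate a transient outage.
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("transient failure")
    return {**state, "tool_result": "ok"}


safe_node = with_retries(flaky_tool_node, max_attempts=3, base_delay=0.0)
result = safe_node({"query": "status"})
```

Writing this once is easy. The maintenance cost comes from repeating and varying it across many steps, which is the case a node-level abstraction is meant to absorb.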

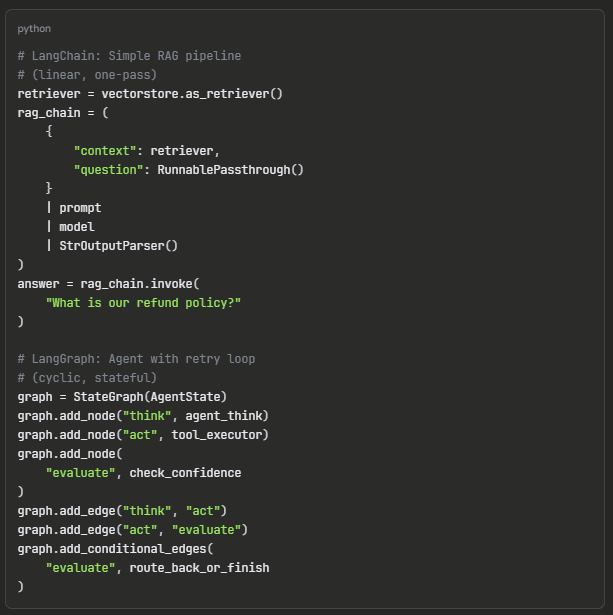

A minimal LangChain RAG chain vs. a LangGraph agent loop illustrates the difference in practice:

The RAG chain runs once, start to finish. The agent loop can cycle through think → act → evaluate multiple times until it reaches a confident answer — exactly what complex enterprise tasks require.
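The think → act → evaluate cycle can be sketched as a bounded loop. Everything here is a toy assumption: `AgentState`, `think`, `act`, `evaluate`, and `run_agent` are hypothetical names, the tools are stubs, and confidence is a made-up heuristic rather than anything a model reports.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AgentState:
    question: str
    observations: List[str] = field(default_factory=list)
    confidence: float = 0.0
    iterations: int = 0


def think(state: AgentState) -> str:
    # Choose which (hypothetical) tool to call next.
    return "search" if len(state.observations) % 2 == 0 else "lookup"


def act(action: str, state: AgentState) -> str:
    # Stub tool call: a real agent would hit an API or a retriever here.
    return f"{action} result #{len(state.observations) + 1}"


def evaluate(state: AgentState) -> float:
    # Toy heuristic: each observation adds 0.4 confidence, capped at 1.0.
    return min(1.0, 0.4 * len(state.observations))


def run_agent(question: str, threshold: float = 0.75,
              max_iterations: int = 5) -> AgentState:
    state = AgentState(question)
    # Keep cycling until the answer is confident enough or the budget runs out.
    while state.confidence < threshold and state.iterations < max_iterations:
        action = think(state)
        state.observations.append(act(action, state))
        state.confidence = evaluate(state)
        state.iterations += 1
    return state


final = run_agent("Which vendor contract should we renew?")
```

The two exit conditions matter equally: the confidence threshold ends the loop on success, and the iteration cap stops a stuck agent from running indefinitely.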

For context: LangChain was launched as an open-source project in October 2022 by Harrison Chase and quickly became the default framework for composing LLM applications, attracting hundreds of integrations and an active community. LangGraph emerged in 2023 to address the growing need for stateful, non-linear agent orchestration that LangChain’s chain-based model couldn’t cleanly support. By September 2025, LangGraph reached v1.0, the first stable major release in the durable agent framework space, signaling production-readiness for enterprise adoption.

| Dimension | LangChain | LangGraph | 2026 verdict |
| --- | --- | --- | --- |
| Design model | Sequential chains (DAG) with LCEL | Node-and-edge cyclic graph with shared state | Graph wins for complex systems |
| Control flow | Mostly linear with conditional steps | Native loops, branching, subgraphs | Graph for orchestration depth |
| State | Externalized or ad hoc | Persistent, first-class state with rollback | Graph for reliability/audit |
| Multi-agent | Possible, more manual | Built for multi-agent coordination | Graph |
| Human-in-the-loop | Manual implementation required | Native checkpoint support | Graph |
| Observability | Add-on logging/tracing | Graph-native traces via LangSmith | Graph |
| Error handling | Chain-style propagation | Node-level retries, timeouts, circuit breakers | Graph |
| Learning curve | Low (LCEL is minimal) | Moderate (graph modeling patterns) | LangChain |
| Community maturity | Large, established since 2022 | Growing, v1.0 stable since Sept 2025 | LangChain (for now) |
| Speed to MVP | Very fast | Moderate | LangChain |
| Scaling complex workflows | Increasing complexity | Predictable structure | Graph |
| Best fit | Chatbots, RAG, summaries | Stateful, governed agentic AI | Depends on need |
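To show what "persistent, first-class state with rollback" means mechanically, here is a minimal in-memory sketch. `CheckpointedState` and its methods are hypothetical and are not LangGraph’s checkpointing API. A production system would write snapshots to durable storage rather than a Python list.

```python
import copy
from typing import Dict, List

State = Dict[str, object]


class CheckpointedState:
    """Keeps every committed snapshot so a workflow can be rolled back."""

    def __init__(self, initial: State) -> None:
        self._history: List[State] = [copy.deepcopy(initial)]

    @property
    def current(self) -> State:
        # Return a copy so callers cannot mutate stored history.
        return copy.deepcopy(self._history[-1])

    def commit(self, update: State) -> int:
        # Merge the update into the latest snapshot and store the result.
        self._history.append({**self._history[-1], **copy.deepcopy(update)})
        return len(self._history) - 1  # checkpoint id

    def rollback(self, checkpoint_id: int) -> State:
        if not 0 <= checkpoint_id < len(self._history):
            raise IndexError("unknown checkpoint")
        # Discard every snapshot recorded after the chosen checkpoint.
        del self._history[checkpoint_id + 1:]
        return self.current


store = CheckpointedState({"ticket": "T-1", "status": "new"})
triaged = store.commit({"status": "triaged"})
store.commit({"status": "refunded", "amount": 500})  # a step we later regret
restored = store.rollback(triaged)
```

Because each commit stores a full snapshot, rolling back also removes keys that later steps added (here, `amount`), which is the behavior an audit trail usually wants.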

Folio3 AI builds custom, enterprise-grade agentic systems that integrate with legacy stacks, comply with governance, and scale reliably — turning LLM potential into measurable business outcomes. Whether your architecture calls for LangChain’s speed-to-value, LangGraph’s stateful orchestration, or a hybrid approach combining both, our team designs and deploys the right framework for your specific requirements.

Our offerings:

LangChain is an open-source framework for building LLM-powered applications using modular, sequential workflows. It uses LCEL (LangChain Expression Language) to chain prompts, retrievers, tools, and models into linear pipelines, ideal for rapid prototypes, chatbots, straightforward RAG, and document QA use cases.

LangGraph is a graph-based orchestration framework built on top of LangChain, designed for complex, stateful, multi-agent workflows. It models applications as directed cyclic graphs of nodes and edges with persistent shared state, enabling loops, branching, retries, and human-in-the-loop checkpoints that LangChain’s linear chain model cannot natively support.

LangGraph is better for multi-agent systems. Its graph architecture natively supports coordination among multiple agents through shared state, explicit transitions, and conditional routing, allowing agents to collaborate, hand off tasks, and adapt to each other’s outputs without custom glue code.

LangChain is a sound choice for production when the workload is a simple, stateless assistant or a single-agent RAG pipeline. For complex, governed workflows requiring retries, auditability, rollback, and multi-agent coordination, LangGraph is typically more maintainable and production-ready.

The two frameworks work well together. Many teams prototype in LangChain and move complex paths to LangGraph while reusing the same models, tools, retrievers, and data components. Since LangGraph is built on LangChain, there is no need to rewrite existing components; you add a stateful orchestration layer on top of what you’ve already built.

LCEL (LangChain Expression Language) is LangChain’s declarative syntax for composing workflows. It uses a pipe operator ( | ) to chain components together — for example, prompt | model | output_parser — making it easy to build, read, and modify linear pipelines with minimal boilerplate code.

LangGraph is part of the LangChain ecosystem: a specialized extension created by the same team. It uses LangChain’s foundational components (model interfaces, retrievers, tools) but adds a graph-based runtime for stateful orchestration, loops, and multi-agent coordination that LangChain’s chain model doesn’t natively support.

LangChain agents follow a linear reasoning loop (think → act → observe) with limited control over branching and retries. LangGraph agents operate within an explicit graph structure where each step, transition, and fallback is defined as a node or edge — providing more granular control over agent behavior, better error handling at the node level, and native support for multi-agent collaboration.

Consider migrating when your LangChain application starts requiring frequent custom workarounds for branching logic, retries, state persistence across sessions, multi-agent coordination, or human-in-the-loop approval gates. If your codebase is accumulating custom glue code to handle non-linear flows, that’s a strong signal to move critical paths into LangGraph’s formal graph abstraction.
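A human-in-the-loop approval gate is a good example of logic that is awkward to bolt onto a linear chain. The sketch below uses a plain Python generator to pause a workflow and resume it with a reviewer’s decision. `refund_workflow` and `resume` are hypothetical names, and the paused state lives only in memory, unlike a persisted checkpoint that would survive a restart.

```python
from typing import Generator


def refund_workflow(request: dict) -> Generator[dict, str, dict]:
    # Step 1: an automated step drafts the action.
    draft = {"customer": request["customer"], "amount": request["amount"]}
    # Step 2: approval gate. Execution pauses here until a decision is sent in.
    decision = yield draft
    # Step 3: continue along the branch the reviewer chose.
    status = "issued" if decision == "approve" else "rejected"
    return {**draft, "status": status}


def resume(workflow: Generator[dict, str, dict], decision: str) -> dict:
    # Sending the decision resumes the paused generator; its return value
    # arrives wrapped in StopIteration.
    try:
        workflow.send(decision)
    except StopIteration as finished:
        return finished.value
    raise RuntimeError("workflow paused again unexpectedly")


workflow = refund_workflow({"customer": "C-42", "amount": 120})
pending = next(workflow)              # runs until the approval gate
result = resume(workflow, "approve")  # a human decision unblocks the rest
```

If you find yourself building and persisting this kind of pause-and-resume machinery by hand, that is the signal described above.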

LangGraph complements LangChain rather than replacing it. LangChain provides the foundational component library (models, tools, retrievers, prompt templates), while LangGraph adds stateful, graph-based orchestration on top of it. Most production systems use both: LangChain for components and simple flows, and LangGraph for complex workflows that require loops, state, and multi-agent coordination.