5 Ways Custom Generative AI Boosts ROI 2026

Custom generative AI helps businesses increase ROI by improving efficiency, reducing operational costs, and delivering more tailored, scalable outcomes in 2026.

What if you could access the same AI capabilities powering billion-dollar products, without paying a single licensing fee?

That's precisely what open-source generative AI models offer. These freely available systems now rival proprietary giants like GPT-4 and Midjourney, putting powerful technology in the hands of solo developers and Fortune 500 companies alike. According to MarketsandMarkets, the global generative AI market is projected to grow from USD 71.36 billion in 2025 to USD 890.59 billion by 2032, at a CAGR of 43.4%. Open-source models are fueling much of this growth because they give you transparency, customization, and cost savings that closed alternatives can't match.

Here's what's changed: tasks that used to need million-dollar budgets and specialized data centers now run on gaming laptops. You don't need expensive API calls anymore; you can download a model, tweak it for your needs, and run it locally while keeping your data completely private.

Open models give you downloadable weights that you can run on your own infrastructure, modify, and (depending on the license) deploy commercially. This transparency lets you examine exactly how a model works, adapt it to your requirements, and maintain full control over your data. You avoid recurring API costs and eliminate the risk of a vendor changing terms, raising prices, or discontinuing a product you depend on.

Closed-source models like GPT-4, Claude, and Midjourney keep their code, training methods, and datasets proprietary. You interact through APIs without seeing what's under the hood. These models often deliver excellent performance because their creators invest massive resources into development, but that comes at a cost. You pay per token or per image, which adds up fast at scale. You're also dependent on the vendor for updates, uptime, and support.

The performance gap between open and closed models has narrowed dramatically. In 2023, open models trailed significantly behind GPT-4. By 2025, models like LLaMA 3.1 405B and Qwen 2.5 72B match or exceed GPT-4 on many benchmarks. For specific tasks like coding, some open models now outperform their proprietary counterparts.

Our AI architects have deployed LLaMA, Mistral, and Qwen across healthcare, finance, and logistics operations. Get a free model selection consultation.

Meta's LLaMA 4 family is among the most capable open-weight large language models currently available. Building on the success of LLaMA 3, this generation pushes capabilities further with improved reasoning, expanded context windows, and better instruction following. The largest variants compete directly with GPT-4 and Claude on many benchmarks.

Best for: general-purpose text generation, chatbots, content creation, and reasoning tasks. If you have fewer than 700 million monthly active users (MAU) and can accept the license terms, this is one of the most capable options available.

Black Forest Labs, founded by researchers behind the original Stable Diffusion, released FLUX.1 as one of the largest and most capable open-weight image generation models available. With 12 billion parameters, it produces visuals that rival Midjourney and DALL-E 3. The [schnell] variant is the only version with a truly permissive license for commercial use.

Best for: commercial image generation applications, marketing content, and product visualization. The Apache 2.0 license on the [schnell] variant makes it safe for business use.

DeepSeek AI's coding model has become a favorite among developers who want powerful code generation without API costs. The V2 version dramatically expands capabilities with a Mixture-of-Experts architecture that activates only 21 billion parameters per token out of a total 236 billion, delivering excellent performance with reasonable compute requirements.

Best for: code generation, completion, documentation, and debugging across a wide range of programming languages. The permissive license makes it suitable for commercial development tools.

Alibaba's latest vision-language model handles text, images, and video within a unified architecture. It rivals GPT-4o and Gemini on multimodal benchmarks while offering permissive licensing for smaller variants. The model can analyze documents, interpret charts, understand screenshots, and even act as a GUI agent that operates software interfaces.

Best for: document processing, visual question answering, screenshot analysis, and GUI automation. The Apache 2.0 license on smaller variants makes them well suited to commercial multimodal applications.

Mistral AI, a French startup, has rapidly become a major force in open-source AI by focusing on efficiency. Their 7B model delivers remarkable performance relative to its size with a truly permissive Apache 2.0 license, making it one of the safest choices for commercial deployment.

Best for: production applications where you need a capable model with minimal licensing restrictions, such as chatbots, content generation, and other products where the Apache 2.0 license matters for legal clarity.

Mistral's Mixture-of-Experts model delivers performance comparable to much larger dense models at a fraction of the compute cost. It has 46.7B total parameters but activates only 12.9B per token, giving you large-model quality with mid-sized model efficiency.

Best for: applications that need near-frontier performance with Apache 2.0 licensing certainty. It is a strong choice when Mistral 7B isn't capable enough but you still need unrestricted commercial use.
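To make the Mixture-of-Experts trade-off concrete, the short sketch below computes the share of parameters that are active per token, using only the figures quoted in this article. The function name `active_fraction` is illustrative. One assumption to keep in mind: per-token compute scales roughly with active parameters, but memory still has to hold all parameters, because any expert may be selected for a given token.

```python
def active_fraction(active_billion: float, total_billion: float) -> float:
    """Share of a Mixture-of-Experts model's parameters used per token."""
    if total_billion <= 0 or not 0 <= active_billion <= total_billion:
        raise ValueError("need 0 <= active <= total and total > 0")
    return active_billion / total_billion

# Figures as quoted in this article (billions of parameters): (active, total).
models = {
    "Mistral MoE model": (12.9, 46.7),
    "DeepSeek Coder V2": (21.0, 236.0),
}

for name, (active, total) in models.items():
    share = active_fraction(active, total)
    print(f"{name}: {share:.1%} of parameters active per token")
```

In other words, these models need the memory of a large model but do the per-token work of a much smaller one.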

Stability AI's latest generation brings significant improvements in image quality, text rendering, and prompt following. It benefits from a massive ecosystem of tools, fine-tunes, and community knowledge, though commercial use requires a paid membership.

Best for: image generation where you can afford the membership fee, or non-commercial and research use. The ecosystem advantages are substantial; you'll find tutorials and tools for virtually any workflow.

OpenAI open-sourced Whisper as a speech recognition model that transcribes audio across 99 languages with remarkable accuracy. It's become the backbone of countless transcription services, accessibility tools, and voice interfaces, with a truly permissive MIT license.

Best for: audio transcription of any kind, including podcasts, meetings, accessibility tools, and voice interfaces. The MIT license makes it safe for any use case.

Alibaba's text-only language model competes at the highest level while offering some of the best multilingual support in the open-source world. Smaller variants use Apache 2.0 licensing, making them excellent choices for commercial deployment.

Requirements: None for Apache 2.0 variants

Best for: multilingual applications, or any use case where you need Apache 2.0 licensing certainty. The range of sizes makes it adaptable to virtually any deployment scenario.

BigCode's community-driven code model comes from an open scientific collaboration between ServiceNow, Hugging Face, and NVIDIA. Trained transparently on permissively licensed code, it offers strong coding assistance with clear licensing and ethical guidelines.

Note: These are reasonable ethical guidelines, not significant commercial barriers.

Best for: code assistance in commercial products where you want clear provenance and ethical guidelines. The OpenRAIL-M license is permissive for standard commercial software development.

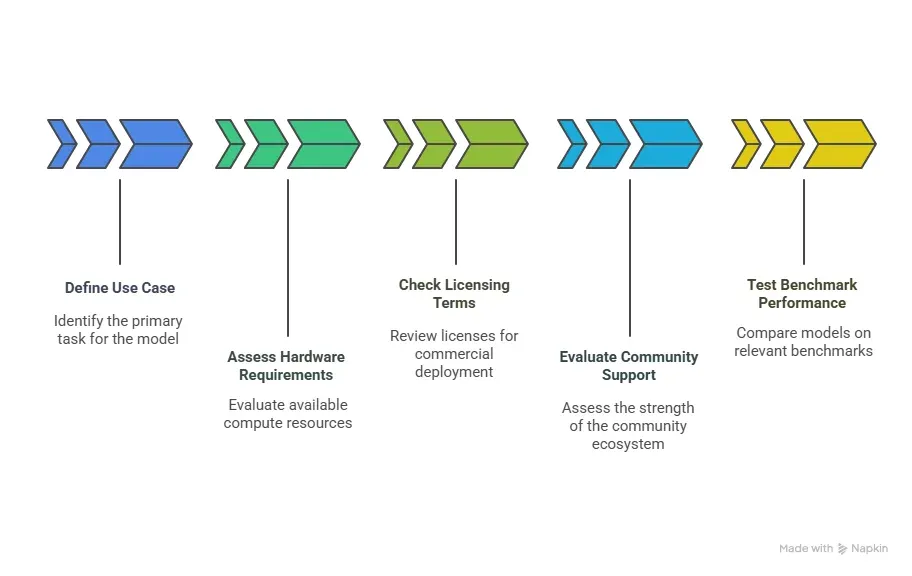

Selecting the optimal model requires evaluating your specific requirements, infrastructure, and deployment constraints. Consider these critical factors before implementation.

Identify your primary task, like text generation, image creation, code assistance, or multimodal processing. Models specialize in specific domains, so matching capabilities to requirements ensures optimal performance.

Evaluate your available compute resources. Consumer GPUs with 24GB of VRAM can handle 4-bit quantized models of up to roughly 30B-40B parameters, while larger models require multiple high-end GPUs or cloud deployment for acceptable performance.

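Review licenses carefully before commercial deployment. Apache 2.0 permits commercial use, modification, and redistribution with few conditions (mainly preserving license and attribution notices), while some models require approval or prohibit commercial applications without explicit agreements.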

Strong community ecosystems provide fine-tuning recipes, integrations, and troubleshooting resources. Models with active communities on Hugging Face and GitHub offer better long-term support and documentation.

Compare models on relevant benchmarks like MMLU, HumanEval, or VQA, depending on your task. However, conduct real-world testing since benchmarks may not reflect actual deployment performance.
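As one way to structure that real-world testing, the sketch below scores a model against a handful of your own prompts with known-good answers. Everything in it is illustrative: `generate` stands in for whatever inference call you use, `exact_match_accuracy`, `fake_model`, and the sample cases are hypothetical, and exact string matching is only the simplest possible scoring rule.

```python
from typing import Callable, Iterable, Tuple

def exact_match_accuracy(
    generate: Callable[[str], str],
    cases: Iterable[Tuple[str, str]],
) -> float:
    """Fraction of prompts whose normalized output equals the expected answer."""
    cases = list(cases)
    if not cases:
        raise ValueError("need at least one test case")
    hits = sum(
        generate(prompt).strip().lower() == expected.strip().lower()
        for prompt, expected in cases
    )
    return hits / len(cases)

# Stand-in for a real model call (hypothetical): a fixed lookup table.
def fake_model(prompt: str) -> str:
    canned = {"Capital of France?": "Paris", "2 + 2 = ?": "5"}
    return canned.get(prompt, "")

cases = [("Capital of France?", "paris"), ("2 + 2 = ?", "4")]
print(exact_match_accuracy(fake_model, cases))  # one of the two answers matches
```

Swapping `fake_model` for a function that calls each candidate model lets you compare them on the same task-specific cases rather than on public benchmarks alone.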

Folio3 AI deploys and fine-tunes open-source generative AI models on your own infrastructure - no vendor lock-in, no recurring API costs.

Open-source models provide significant advantages for organizations seeking control, customization, and cost efficiency. These benefits drive increasing enterprise adoption across industries.

Local deployment ensures sensitive data never leaves your infrastructure. Healthcare, finance, and legal sectors particularly benefit from processing confidential information without third-party exposure.

Eliminate recurring API fees by hosting models locally. After initial infrastructure investment, operational costs remain predictable regardless of usage volume, improving long-term ROI.
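A rough break-even calculation can show when that infrastructure investment pays off. The sketch below is a simplification under stated assumptions: costs are flat per month, and every figure in the example is hypothetical rather than taken from any vendor's pricing. The function name `break_even_months` is likewise illustrative.

```python
import math

def break_even_months(hardware_cost: float,
                      monthly_hosting_cost: float,
                      monthly_api_cost: float) -> float:
    """Months until self-hosting becomes cheaper than paying API fees.

    Returns math.inf when the API costs no more per month than running your
    own hardware, because the upfront investment is then never recovered.
    """
    monthly_saving = monthly_api_cost - monthly_hosting_cost
    if monthly_saving <= 0:
        return math.inf
    return hardware_cost / monthly_saving

# Hypothetical figures for illustration only.
months = break_even_months(hardware_cost=30_000,
                           monthly_hosting_cost=1_000,
                           monthly_api_cost=4_000)
print(f"Break-even after {months:.0f} months")
```

If your API spend is low or unpredictable, the function returns infinity or a very long horizon, which is a signal that a hosted API may still be the cheaper option.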

Fine-tune models on proprietary datasets for domain-specific performance. Organizations can optimize outputs for their exact terminology, formats, and quality standards without vendor limitations.

Access to model weights and architecture (and, for some models, training data and methodology) enables bias detection and compliance verification. Regulated industries can demonstrate AI governance and explain model decisions to stakeholders.

Switch between models freely as better alternatives emerge. Organizations maintain strategic flexibility without contractual obligations or migration penalties from proprietary providers.

Despite their advantages, open-source models present challenges that organizations must address. Understanding these limitations enables better planning and resource allocation.

Larger models demand significant GPU memory and processing power. Running state-of-the-art models locally requires substantial hardware investment that may exceed smaller organizations' budgets.

Community-driven development lacks guaranteed support response times. Organizations must rely on forums, documentation, and internal expertise for troubleshooting critical production issues.

Self-hosting transfers security obligations to your team. You must implement access controls, monitor vulnerabilities, and maintain compliance without a vendor security infrastructure.

Many open-source models lack content moderation and safety features. Organizations must implement their own filtering systems for harmful outputs, bias mitigation, and alignment controls.

Deploying models in production requires MLOps expertise for scaling, monitoring, and maintenance. Teams without specialized skills face steep learning curves compared to turnkey API solutions.

The open-source AI ecosystem continues evolving rapidly with innovations that narrow the gap with proprietary systems. These trends will shape the landscape through 2026 and beyond.

Research focuses on achieving the performance of larger models with fewer parameters. Techniques like distillation and quantization enable deployment on consumer hardware with limited quality loss.

Models increasingly process text, images, audio, and video within unified architectures. This convergence enables richer applications from autonomous agents to comprehensive content creation.

Optimized models run directly on devices for privacy and low latency. Mobile phones, embedded systems, and IoT devices gain access to sophisticated AI without cloud connectivity.

Chain-of-thought and deliberative reasoning approaches enhance problem-solving abilities. Open-source reasoning models like DeepSeek-R1 demonstrate competitive performance on complex tasks.

Professional deployment frameworks, monitoring solutions, and governance tools mature rapidly. Organizations gain production-ready infrastructure previously available only with proprietary platforms.

As a trusted generative AI development partner, Folio3 AI delivers end-to-end solutions that help enterprises accelerate innovation, streamline operations, and achieve measurable business outcomes across industries.

We design and build custom generative AI models fine-tuned to your data, industry, and specific use cases. Our models deliver accuracy, scalability, and tangible business value across text, visuals, and complex datasets.

We embed generative AI capabilities into your existing IT ecosystem, including CRM, ERP, and proprietary platforms. Our integration approach ensures minimal workflow disruption while maximizing operational efficiency and system performance.

Our specialists craft optimized prompts tailored to your enterprise applications, ensuring consistent, relevant, and high-quality AI outputs. This results in improved model performance and reliable, repeatable results.

Strengthen your internal capabilities with our experienced MLOps specialists. We manage model deployment, monitoring, scaling, and ongoing optimization to keep your AI systems production-ready and performing at peak efficiency.

We automate repetitive coding tasks using AI-driven tools, accelerating development cycles and reducing manual effort. This improves code quality while freeing your teams to focus on strategic initiatives.

From model selection and fine-tuning to MLOps and production deployment, Folio3 AI handles the full lifecycle, so your team focuses on outcomes, not infrastructure.

LLaMA 4 and Qwen 2.5 offer the strongest general-purpose performance with broad language support and reasoning capabilities. Both models provide multiple size variants for different hardware constraints.

Yes, models like LLaMA 3.1 405B and Qwen 2.5 72B match or exceed GPT-4 on many benchmarks. The performance gap has narrowed significantly, with open-source models excelling in specific domains.

Requirements vary by model size: 7B models need roughly 8-16GB of VRAM, while 70B models need roughly 40-80GB when quantized (about 140GB for the weights alone at 16-bit precision). Quantization techniques can reduce requirements by 50-75% with minimal quality loss.
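The arithmetic behind these figures is simple enough to sketch. The helper below (a hypothetical name, `weight_memory_gb`) estimates memory for the weights alone as parameter count times bytes per parameter. It deliberately ignores the KV cache, activations, and framework overhead, so treat the results as a lower bound rather than a sizing guarantee.

```python
def weight_memory_gb(params_billion: float, bits_per_param: int) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    bytes_per_param = bits_per_param / 8
    # Billions of parameters times bytes per parameter gives gigabytes.
    return params_billion * bytes_per_param

for size in (7, 70):
    for bits in (16, 8, 4):
        print(f"{size}B @ {bits}-bit: ~{weight_memory_gb(size, bits):.1f} GB")
```

Going from 16-bit to 8-bit halves the footprint and going to 4-bit cuts it by three quarters, which is where the 50-75% reduction range comes from.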

Licensing varies by model. Apache 2.0 licensed models like Mistral 7B allow unrestricted commercial use, while others like LLaMA require acceptance of specific terms or have usage restrictions.

DeepSeek Coder V2 and Qwen3-Coder lead coding benchmarks with support for 300+ languages. StarCoder2 and CodeLlama offer excellent alternatives with different licensing terms.

Tools like Ollama, LM Studio, and vLLM simplify local deployment. Download model weights from Hugging Face, then use these frameworks to run inference without complex infrastructure setup.
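As a minimal sketch of what local inference can look like, the example below sends a prompt to an Ollama server over its local HTTP API using only the Python standard library. It assumes Ollama is running on its default port and that a model has already been pulled; the helper names `build_request` and `generate` and the model name are illustrative choices, not part of Ollama itself.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint

def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt and return the generated text (needs a running server)."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]

# Example call, assuming `ollama pull mistral` has been run beforehand:
#   print(generate("mistral", "Summarize the Apache 2.0 license in one sentence."))
```

Because the request never leaves localhost, the prompt and the response stay on your own machine.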

FLUX.1 currently leads in image quality and prompt adherence with 12B parameters. Stable Diffusion 3.5 offers a mature ecosystem with extensive customization options and broader community support.

Yes, most open-source models support fine-tuning with techniques like LoRA, requiring minimal resources. This enables domain-specific optimization for healthcare, legal, finance, and other specialized applications.
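To see why LoRA needs so few resources, it helps to count parameters. LoRA freezes the original weight matrix and trains only a low-rank update made of two small matrices. The sketch below compares the two counts for a single layer; the layer size and rank are hypothetical values chosen for illustration, and `lora_trainable_params` is an illustrative helper rather than a library function.

```python
def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Parameters LoRA adds for one weight matrix of shape (d_out, d_in).

    The frozen matrix W gets a learned update B @ A, where B has shape
    (d_out, rank) and A has shape (rank, d_in).
    """
    return rank * (d_out + d_in)

d_out = d_in = 4096  # hypothetical projection size
rank = 8             # hypothetical LoRA rank

full = d_out * d_in
lora = lora_trainable_params(d_out, d_in, rank)
print(f"full fine-tune: {full:,} params; LoRA: {lora:,} params ({lora / full:.2%})")
```

For this layer, LoRA trains well under 1% of the parameters a full fine-tune would update, which is why it fits on far more modest hardware.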

Multimodal models process multiple input types (text, images, audio, and video) within a single architecture. They enable applications like visual question answering, document analysis, and GUI automation.

Local deployment keeps all data within your infrastructure with no external API calls. This ensures complete control over sensitive information and compliance with data protection regulations.

Custom generative AI helps businesses increase ROI by improving efficiency, reducing operational costs, and delivering more tailored, scalable outcomes in 2026.

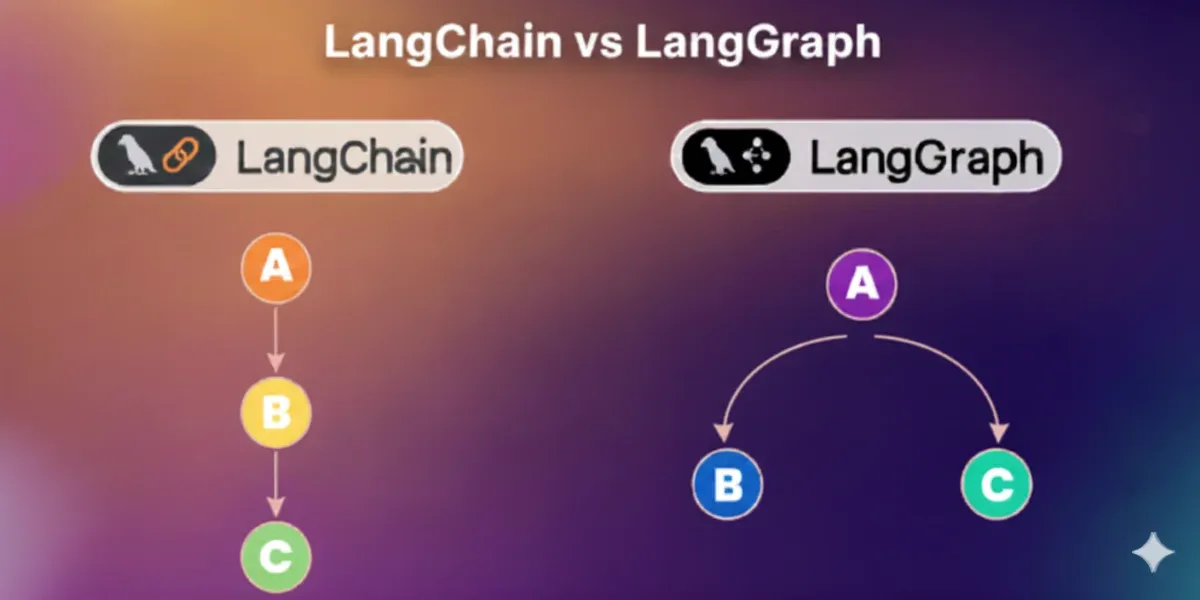

A practical guide to building scalable generative AI architecture for the enterprise, covering infrastructure, security, orchestration, and governance.